The Mandelbrot set, like most fractals, requires a lot of computations to be drawn. That’s why most programs on the Commodore 64 and other classic computers are quite slow and may require even hours to draw this fractal. Of course, slow programs usually take much care for accuracy, and they end up showing off really detailed images. Sometimes, programs are slow simply because they are coded with BASIC. An interpreted BASIC – like the one on the Commodore 64 – offers the ability to code the Mandelbrot set algorithm with ease and accuracy (thanks to its floating point routines), but sadly speed is just something it can’t bless us with.

Another option is to use assembly language and taking advantage of the built-in floating point routines of the BASIC interpreter. Those can be called from machine language and they allow for accurate floating point calculations. But still, accuracy trades for speed here. And since I wanted to come up with something not very accurate but fast, I had to go for another option.

My choice was to use integer numbers. When using integers to replace decimal numbers, an offset can be used to hold the information of decimal digits. This is called fixed point math. This way, computations are much faster than by using floating point numbers.

I didn’t use heavily optimized multiplications routines. I just used standard bit-shifting routines, but I did take care of the range of numbers. 8 bit numbers are faster to multiply than 16 bit ones, so I used different multiplication routines. Numbers are 16 bit signed, so the program makes use of the two’s complement form for calculations, and multiplication routines take signs into account.

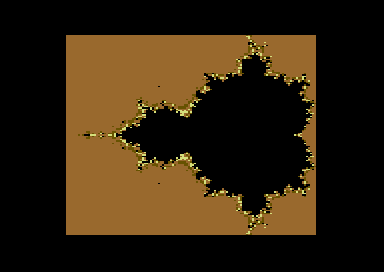

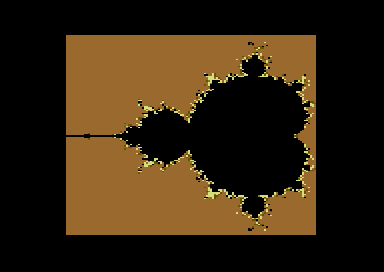

The following program draws the Mandelbrot set in four different colors (including the black color for Mandelbrot set points) using multicolor bitmap mode. Two versions are provided: the standard one, which computes the whole fractal, and the mirrored one, which is faster as it only computes one half of the fractal image, using the same approach of this program.

(Beta versions)

DOWNLOAD: Mandelbrot set, mirrored (C64 assembly version executable)

DOWNLOAD: Mandelbrot set, mirrored (C64 assembly version source code)

DOWNLOAD: Mandelbrot set, not mirrored (C64 assembly version executable)

DOWNLOAD: Mandelbrot set, not mirrored (C64 assembly source code)

The mirrored version completes execution in 1 minute and 36 seconds, while the unmirrored version takes 3 minutes and 10 seconds to complete. The fractal is not very detailed nor perfectly accurate, but still, it does show the behaviour of many points. And the points near the black areas show some nice shading.

Twenty iterations have been used for computations, just like the C64 BASIC versions that can be found in the previous articles. An offset as small as 64 has been used for most integer math calculations. It can’t provide much detail for the fractal image, but it does allow the program to be fast.

Diverging points which are far from the Mandelbrot set have been plotted using the same color, because the limited accuracy of these small integer numbers do not allow for a perfect representation of all diverging points (the small offset used implies that some numbers are not able to grow fast enough during iterations). But still, I have checked these areas and the behaviour is mostly correct. Overflow doesn’t seem to happen either.

Although technical details will be discussed later on, a little technical note may be in order now. To compute the product 2*R*I, 8 bit multiplication is used when numbers are small enough. This way, computations gain a lot of speed (16 bit multiplication is much slower). Still, to keep the factors in the 8 bit range on this case, the product must be calculated in the order: (R*I)*2. At this point, instead of multiplying the product (R*I) by two, then applying the offset value 64 (like in the 16 bit multiply routine), we can just divide R*I by 32. Although in everyday math the way factors are arranged doesn’t change the result, in this case we have to pay a little loss of accuracy. In facts, we have a couple of fake Mandelbrot set points in the picture. But, the speed advantage obtained makes this little imperfection more than acceptable. Still, even with the 16 bit multiply routine, those two points – even if they actually become non Mandelbrot set points – do have different colors than the other diverging points nearby. I think that 24 bit math is capable to fix the issue, but I just wanted to keep the program fast with simple code, without having to resort on ultra optimized multiply routines.

Still, a better result can be achieved by forcing the 16 bit multiplication all the time and by using shading on diverging points only for k < 12 (k is the number of iterations). Now fast diverging points have the same colors.

This program contains many interesting things as it requires dealing with signed numbers and the use of an integer offset on iterative computations. The following explanations are also intended as a complement to this article.

Dealing with signed integers – addition and multiplication (two’s complement form)

Signed numbers can be represented by using the two’s complements form. We have already used them in the assembly program “Sphere”.

Eight bit signed numbers are in the range -128… -1, 0… +127. 0 has no sign in theory but it is regarded as a positive number in computing practice.

Positive 8 bit numbers are those in which bit 7 is not set. In negative numbers, bit 7 is set instead. So, $ff equals -1 for instance. That’s because -1 is obtained by counting down one step from 0. And if you perform an DEC from 0, you just obtain 255, which we have decided to see as -1. Actually, the two’s complement form is just a convention.

Similarly, the number -2 is obtained by counting down from 0 two times, so it is represented by the number 254. Again, perform two DECs from 0, and you will get 254.

So, 254 is the two’s complement form of -2, and “2” is the module of this number. How do we get the module?

First, consider that if you count up one time from 255, you should get 256. But, with 8 bit numbers you just wrap up to 0. But, in a way, we can see 0 as 256 in this case. Actually, to get the module of the two’s complement form number 255, you just subtract it from 256 ,which is our zero now:

256-255 = 1, bit 7 is set to one in 255, so: 255 is a negative number, its module is 1, so: 255 equals -1.

As a further example, the two’s complement form negative number 240 equals -16 (256-240 = 16, bit 7 is set so the number is negative).

Numbers where bit 7 is set are quite easy to spot. Since 2^7 equals 128, negative numbers are those equal to or greater than 128. Positive numbers are the remaining ones, that is those from 0 to 127.

In the Mandelbrot program, we need 16 bit signed numbers. We just have to extend the previous statements to 16 bit.

A 16 bit unsigned number ranges from 0 to 65535 (65536 different values). If we need signed numbers, bit 7 of the high byte (bit 15 in the two-byte pair) will tell us the sign of numbers. So, 16 bit signed numbers will range from -32768 to -1, and from 0 to 32767 (as 2^15 is 32768).

So, numbers from 0 to 32767 are positive. In facts, bit 15 is not set in those numbers (2^15 = 32768). These numbers are just less than 32768. Numbers from 32768 to 65535 are negative instead, as bit 15 is set on them.

Now, 65536 can be seen as our new zero. It acts just as 256 in 8 bit numbers. So, if we look at the number 65100 (two’s complement form), we can calculate its module as follows:

65536 - 65100 = 436

So, 65100 is the two’s complement form of -436.

Again, please note that numbers are always stored as unsigned. It’s just that we are deciding of seeing them as signed numbers and are willing to use these conventions.

Additions work quite straight. You can just add two’s complement numbers together, positive and negative. For instance, suppose we want to add -20 and 100 together (8 bit numbers). We just have to convert -20 in two’s complement form, than add it to 100 (as it is positive, it just needs no conversion).

- 20 => 256 - ABS(-20) = 256 - 20 = 236 236 is the two's complement form of -20

Now, let’s add 236 and 100 together. As we are dealing with 8 bit numbers, if we count up from 236, numbers will wrap around when we reach 0 (which we may see as 256). So, if we add 20 units to 236 we get 0. Now, since we had to add 100 units, we still have 100-20 = 80 units to add. So, we can add the remaining 80 units to 0 obtaining 80, which is our result.

In fact: -20 + 100 = 80.

The 6502/6510 processor just counts this way, so we have no concerns. We just have to code additions as usual. The only thing that changes is overflow check. Despite of using the C flag, we have to use the V flag on the higher byte this time.

As an easier example, suppose we want to add -1 and 1 together. Do we get 0? Let’s see it.

- 1 = 255 in two's complement form. 255 + 1 = 0 (which may be seen as 256).

Multiplication only works with unsigned numbers. So, in general, a signed multiplication routine must take the module of numbers and multiply them. So, the sign bit of each number must be checked before multiplication is performed, so that the sign bit of the product can be set accordingly. The instructions BPL and BMI are available (just have a look at the code).

For instance, if we have to multiply two signed numbers A and B, with result C, the sign of the product must be assigned as follows.

A * B = C + + + - + - + - - - - +

Suppose we use some memory locations to store the signs of the factors. Let’s say that 0 means positive, and 1 means negative. We can obtain the sign of the product by simply EORing together the signs of the factor. Just have a look at the truth table of the EOR operator, and provided that 0 means positive and 1 means negative, it just works as the above sign rules:

A B EOR PRODUCT SIGN 0 0 0 + 1 0 1 - 0 1 1 - 1 1 0 +

This is just what you can find in the code of one of the two signed multiplication routines:

; sign of product evaluation lda multiplicand_sign8 eor multiplier_sign8 beq skip_product_complement8 ;if product is positive, skip product complement

Still, if we just have to multiply a signed number by two using the ASL instruction, then we can directly work on signed numbers. The program does this when computing the product 2*R. In facts, if we shift the negative number -20 towards the left one time, the bit sign goes on the C flag, and the higher bit of the two’s complement form number is just guaranteed to replace the sign bit with a 1, keeping the number negative:

S

[1][1][1][0][1][1][0][0] <---0

C<-1 [1][1][0][1][1][0][0][0]

^sign

So, -20 in two’s complement form is 236 decimal, or 11101100 binary.

If we shift it left one time, we get: 11011000, which is: 216 decimal (two’s complement form). Do we get -40, which is the right result?

256-216 = 40, module of the negative number.

So, we actually get -40, as required. That demonstrates that ASL can successfully multiply by two negative numbers. That’s why it is called arithmetic shift left: it can preserve signs.

Overflow may happen of course, but that’s a whole different story.

On the contrary, LSR cannot preserve the sign. If we try to shift right a signed negative number, the sign bit will sadly become positive. That’s why LSR is called a logic shift. It just can’t handle signed math.

On the next part, we will talk about the fixed point math used in the program (that is, using integers to simulate decimal numbers), setting up a table of squares (used to quickly compute the terms R^2 and I^2 when R and I are 8 bit numbers ) and the way to plot multicolor points.

Love it! For the uninitiated, it is like a secret club! Thank you!

nice to see someone diving deep into fast & accurate calculation

Thanks! This kind of coding did help me on understanding math better. 🙂

Hello,

Today i have updated the above source code with an time measurement subroutine and changed the variables to zero Page entries. The ZP „optimation“ in this case is huge. The calculation Time is now 9549 that means , divided two times by 60, 2 minutes and 40 seconds. 🙂

Thanks a Lot for sharing the Code.

You are more than welcome, I’m glad you optmized the code. 🙂

Very Good Code. Thanks for Sharing it. I have stored the values in ZP. This Speed up about 30 seconds.